We use AI to help write our news articles and technical guides. We also don’t trust it. Here’s how we combine multiple AI tools with human expertise to create content that’s actually accurate.

You might have noticed we publish quite a lot of articles on technical topics—Windows updates, security threats, regulatory changes, industry news. What you might not know is that we use AI tools to help create them.

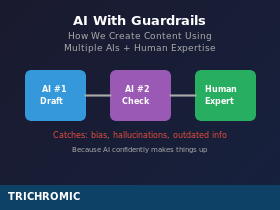

We’re not hiding this. We think it’s worth explaining, because the way we use AI is probably not what you would assume. We don’t just ask an AI to write an article and publish whatever it produces. That would be a disaster. Instead, we’ve developed a workflow that treats AI as a powerful tool that requires constant supervision, like a very fast, very knowledgeable assistant who occasionally makes things up with complete confidence.

Here’s how it actually works, including the mistakes we’ve caught along the way.

The Basic Workflow

Our content creation process typically involves three parties: a human with domain expertise (that’s us), a primary AI for drafting (usually Claude), and a second AI for fact-checking (usually ChatGPT). The human stays involved throughout.

It works like this:

- We identify a topic based on something we’ve encountered in our work, something in the news, or a question clients keep asking.

- We brief the AI with context about what we want to cover, who the audience is, and any specific points that need including.

- The AI produces a draft, which we review. We correct technical errors, adjust tone, add real-world examples from our experience, and remove anything that doesn’t sound right.

- For topics outside our expertise, we fact-check with a second AI: we copy the draft into a different AI system and ask it to verify the claims. Different AIs have different training data and different blind spots.

- We make final corrections based on the fact-check, then publish.
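
For readers who think in code, the steps above can be sketched roughly as follows. This is an illustration only, not a tool we run: `Draft`, `run_workflow` and the three callables passed into it are hypothetical names, and in practice each step is a person working in a chat window rather than a function call.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    text: str
    log: List[str] = field(default_factory=list)  # record of checks applied


def run_workflow(
    brief: str,
    draft_with_ai: Callable[[str], str],            # hypothetical: primary AI
    human_review: Callable[[str, List[str]], str],  # hypothetical: the expert
    fact_check: Callable[[str], List[str]],         # hypothetical: second AI
    outside_expertise: bool,
) -> Draft:
    # The primary AI drafts from the brief, then a human corrects the draft.
    draft = Draft(text=draft_with_ai(brief))
    draft.text = human_review(draft.text, [])
    draft.log.append("human review")

    # For unfamiliar topics, a second AI lists the claims it disputes.
    if outside_expertise:
        issues = fact_check(draft.text)
        draft.log.append(f"fact-check flagged {len(issues)} claim(s)")
        # A human, not the AI, decides how to resolve each flagged claim.
        if issues:
            draft.text = human_review(draft.text, issues)
            draft.log.append("final corrections")

    return draft
```

The point of the sketch is the ordering: the human review runs on every draft, while the second-AI check is an extra pass whose findings go back to the human rather than straight into the published text.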

This sounds straightforward, but the interesting part is what goes wrong—and how we catch it.

When AI Gets It Wrong

AI makes mistakes. Not occasionally—regularly. The mistakes fall into a few categories:

Confident fabrication. AI will state things as fact that simply aren’t true, with no hedging or uncertainty. Recently, while writing an article about age verification laws, the AI confidently stated that Colorado had passed a specific law. It hadn’t—the bill was still under consideration. The AI presented this as settled fact. A second AI caught the error during fact-checking.

Outdated information. AI training data has a cutoff date, and it doesn’t always know what it doesn’t know. It might describe a product feature that’s been discontinued, or miss a recent change to legislation. This is why current events and recent technical changes need extra verification.

Bias from training data. AI learns from human-written content—billions of documents, articles, and discussions. Those humans had biases, and the AI absorbed them. In one article discussing cybersecurity threats, the AI defaulted to mentioning a specific country when describing where attacks originate—reflecting a stereotype that’s common in online discussions but unfair and inaccurate. We caught it, but only because we were paying attention. The second time it happened, in a completely different conversation, we realised this was a pattern, not a one-off.

Plausible-sounding nonsense. Sometimes AI produces explanations that sound technical and authoritative but don’t match how things actually work. If you’re not an expert in the topic, these can slip through. We caught one AI explaining a monitoring process we supposedly do daily—except we don’t, because we don’t have the staff for that. It sounded reasonable, but it wasn’t true of our business.

Why Two AIs Are Better Than One

Different AI systems are trained on different data, at different times, by different organisations. They have different strengths and different blind spots.

When we ask a second AI to review a draft, it often catches things the first AI missed or stated incorrectly with full confidence. The Colorado law example is a perfect case: the first AI was confident, while the second AI flagged that the bill hadn’t actually been enacted and provided sources we could check.

This isn’t foolproof. Both AIs could share the same misconception if it’s widespread enough in their training data. But it catches a lot of errors that would otherwise make it into published content.

Think of it like getting a second opinion. Two doctors might both be wrong, but you’re less likely to miss something obvious if you ask both of them.

Why The Human Still Matters

The most important part of this process is the human who knows the subject.

When we write about Windows updates, Microsoft 365 security, or backup procedures, we’re writing about things we do every day. We know when the AI’s description doesn’t match reality because we’ve actually done the work. We know that patching happens on weekends, that we check systems on Sunday mornings before clients arrive on Monday, that certain Microsoft features require expensive licensing upgrades the AI doesn’t mention.

The AI doesn’t know our business. It doesn’t know that we use monthly Microsoft licensing rather than annual commitments, or that we get weekly internal reports to track renewal dates, or that we test RDP sessions by opening Word for five minutes after updates to check for crashes. These details come from experience, not from training data.

This is why AI can’t replace expertise—it can only amplify it. An expert using AI can produce more, faster, while maintaining quality. A non-expert using AI will confidently publish nonsense because they can’t tell when the AI is wrong.

Knowing When You Don’t Know

The trickiest situation is when we’re writing about something outside our core expertise.

The age verification legislation article is a good example. We know about IT infrastructure, but we’re not experts in American technology law. We couldn’t personally verify whether the AI’s claims about specific bills were accurate.

That’s precisely when the second-AI fact-check becomes essential. We explicitly told the fact-checking AI: “We don’t know this subject well enough to verify this ourselves. Please check these claims.” It came back with a detailed list of corrections: this bill is still under consideration, that fine structure is more nuanced than stated, this jurisdiction’s law works differently than described.

Knowing that you don’t know something is crucial. It tells you when to apply extra scrutiny.

What This Means For Your Business

We’re sharing this because many of our clients are curious about using AI in their own businesses—for content, for customer service, for internal documentation, for all sorts of tasks.

Our advice, based on doing this ourselves:

Use AI for things you understand. If you can spot when it’s wrong, you can use it safely. If you can’t, you’re gambling.

Cross-check with a second AI. It’s fast and it catches errors. Just copy the output into a different AI and ask it to verify the claims. Use paid subscriptions to get the best models; the free tiers are generally weaker and more likely to miss things.

Never publish or send without human review. AI is a drafting tool, not an autopilot. Someone who knows the subject needs to read it before it goes out.

Be especially careful with facts, figures, and names. AI is most dangerous when it’s specific—confidently citing a law that doesn’t exist, a statistic it made up, or a person who never said what it claims they said.

Watch for bias. AI reflects the biases in its training data. If something feels like a stereotype or an assumption, it probably is.

The Bottom Line

AI is genuinely useful. It helps us produce more content, explain complex topics more clearly, and respond to developments more quickly than we could otherwise. This article you’re reading was drafted with AI assistance.

But AI with guardrails is very different from AI without them. The guardrails are human expertise, cross-checking with multiple sources, and a healthy scepticism about anything the AI states confidently.

We’re not pretending our articles are hand-crafted by humans burning the midnight oil. We’re also not letting AI publish unchecked content with our name on it. We’ve found a middle ground that works: AI does the heavy lifting, humans provide the expertise and quality control, and a second AI helps catch what we might miss.

If you’re thinking about using AI in your business and want advice on how to do it sensibly—with appropriate guardrails—give us a call on 020 3327 0310. We’ve learned a lot by doing this ourselves, and we’re happy to share what works.